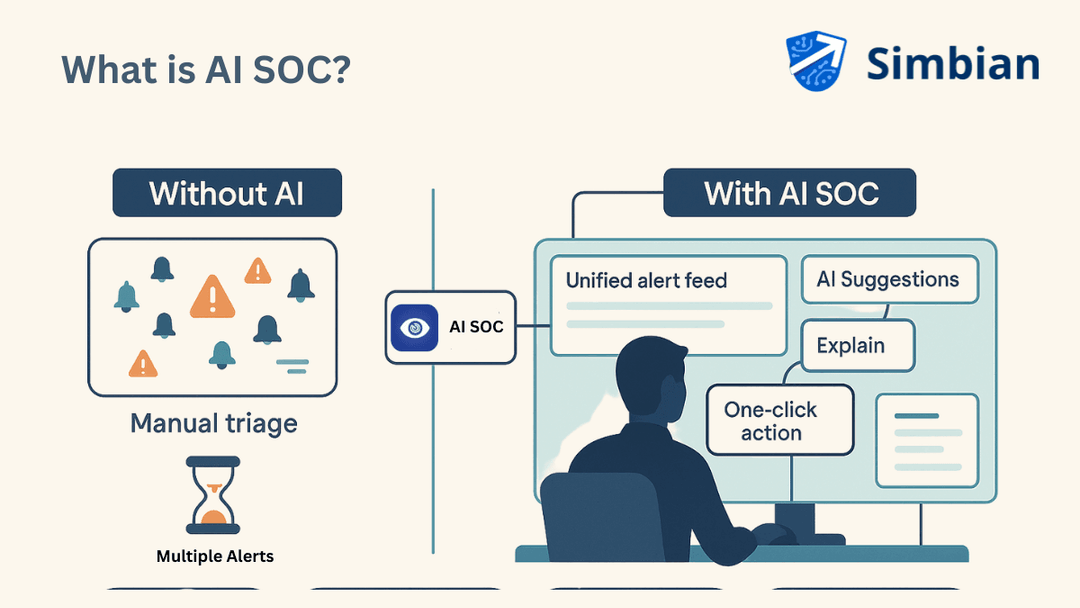

If you’ve been wondering what an AI SOC is, here’s the simple version: an AI SOC (AI-powered Security Operations Center) is a modern operating model where autonomous, “agentic” AI systems assist human analysts around the clock by triaging alerts, correlating signals, running investigations, and even executing bounded responses. Instead of drowning in noise, your team sees fewer, higher-quality, risk-prioritized incidents and spends most of its time on strategic defense and threat hunting. Recent write-ups on “intelligent SecOps” capture this shift well, highlighting how AI enhances triage, context enrichment, and response to reduce false positives and shorten MTTR.

A practical way to think about it: an AI SOC agent sits on top of your telemetry (SIEM/XDR), knowledge (asset/identity/business context), and playbooks (SOAR/ITSM), then reasons over the graph of what’s happening to decide what matters now and what to do next. Multiple industry explainers echo this definition, describing AI SOCs as SOCs that apply AI to automate processes, speed up investigations, and strengthen decisions.

Traditional SOCs are manual and siloed; analysts swivel between tools and react to floods of alerts. AI SOCs add agentic reasoning that correlates evidence, understands relationships across entities, and continuously learns from outcomes. Multiple vendors and primers emphasize that the aim isn’t to replace analysts but to elevate them—moving from whack-a-mole to proactive defense and threat hunting.

Agentic AI isn’t just a fancy script. Agents plan, reason, and act toward goals like “triage this alert,” “correlate related events,” or “contain this endpoint.” Modern overviews describe specialized SOC agents that ingest telemetry and history, then produce actions or recommendations for humans in the loop.
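To make “actions or recommendations for humans in the loop” concrete, here is a minimal sketch of an approval gate that decides whether an agent’s proposed action may run. The tier names, the `POLICY` mapping, and the `gate` function are all hypothetical and only illustrate the idea; a real deployment would express this through whatever approval workflow its SOAR or ITSM tooling provides.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    AUTO = "auto"            # agent may act without a human
    APPROVAL = "approval"    # agent must wait for analyst sign-off
    RECOMMEND = "recommend"  # agent only suggests; a human performs the action


# Hypothetical policy: proposed action name -> autonomy tier.
POLICY = {
    "enrich_alert": Tier.AUTO,
    "isolate_endpoint": Tier.APPROVAL,
    "disable_account": Tier.RECOMMEND,
}


@dataclass
class Decision:
    action: str
    target: str
    execute: bool
    reason: str


def gate(action: str, target: str, approved: bool = False) -> Decision:
    """Decide whether a proposed agent action may run right now."""
    # Actions missing from the policy fall back to the most restrictive tier.
    tier = POLICY.get(action, Tier.RECOMMEND)
    if tier is Tier.AUTO:
        return Decision(action, target, True, "auto-approved by policy")
    if tier is Tier.APPROVAL and approved:
        return Decision(action, target, True, "approved by analyst")
    if tier is Tier.APPROVAL:
        return Decision(action, target, False, "waiting for analyst approval")
    return Decision(action, target, False, "recommendation only")


if __name__ == "__main__":
    print(gate("enrich_alert", "alert-123"))
    print(gate("isolate_endpoint", "laptop-42"))
    print(gate("isolate_endpoint", "laptop-42", approved=True))
```

Defaulting unknown actions to the recommend-only tier is a deliberate choice in this sketch: anything the policy has not explicitly classified stays in human hands.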

A well-built AI SOC scores alerts by actual business risk, not just signature severity—combining threat intel, identity and asset criticality, and environmental context—so only the incidents that truly need human expertise surface to your queue.
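As an illustration of scoring by business risk rather than signature severity alone, the sketch below blends four normalized inputs into one score and surfaces only alerts above a threshold. The weights, field names, and the 0.6 threshold are assumptions chosen for the example, not values from any product; real systems typically use richer models than a weighted sum.

```python
from dataclasses import dataclass

# Hypothetical weights; they sum to 1.0 so the score stays in the range 0..1.
WEIGHTS = {"severity": 0.3, "asset": 0.3, "identity": 0.2, "intel": 0.2}


@dataclass
class Alert:
    name: str
    severity: float            # detection/signature severity, 0..1
    asset_criticality: float   # business importance of the affected asset, 0..1
    identity_privilege: float  # privilege level of the involved account, 0..1
    intel_match: float         # confidence of a threat-intel match, 0..1


def risk_score(alert: Alert) -> float:
    """Weighted blend of severity and business context."""
    score = (
        WEIGHTS["severity"] * alert.severity
        + WEIGHTS["asset"] * alert.asset_criticality
        + WEIGHTS["identity"] * alert.identity_privilege
        + WEIGHTS["intel"] * alert.intel_match
    )
    return round(score, 3)


def triage_queue(alerts, threshold=0.6):
    """Return (score, alert) pairs at or above the threshold, highest risk first."""
    scored = [(risk_score(a), a) for a in alerts]
    surfaced = [pair for pair in scored if pair[0] >= threshold]
    return sorted(surfaced, key=lambda pair: pair[0], reverse=True)


if __name__ == "__main__":
    alerts = [
        Alert("high-severity signature on a lab VM", 0.9, 0.1, 0.1, 0.0),
        Alert("medium-severity anomaly on a domain controller", 0.5, 1.0, 0.9, 0.8),
    ]
    for score, alert in triage_queue(alerts):
        print(score, alert.name)
```

With these made-up inputs, the medium-severity alert on the domain controller outranks the high-severity alert on the lab VM, and only the former reaches the analyst queue.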

Every closed investigation is a training signal. Over time, the system adapts to your unique environment and improves accuracy—so “noisy” detections get smarter without adding analyst toil.

Before you deploy agents, ensure clean pipes. You’ll integrate with your SIEM (telemetry + rules), XDR (endpoint/network detections), SOAR (playbooks), and ITSM (ticketing/approvals). Clear explainers outline the different roles each tool plays and how they overlap.

Measurable Outcomes & Benchmarks

MTTA/MTTR: target 30–70% reductions by automating enrichment and standard responses.

False Positive Rate: aim for step-function drops by adding identity/asset context and behavior analytics. Some vendors report “90% reduction in false positives,” but treat this as a hypothesis until your pilot proves it.

Analyst Hours Returned: measure time saved per use case (phishing triage, EDR outbreaks, SaaS anomalies).

Coverage: use ATT&CK Navigator to evidence which TTPs you detect today vs. after rollout.
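The time and false-positive metrics above can be computed from exported incident records before and after a pilot. The following is a rough sketch that assumes each record is a dict with hypothetical `created`, `acknowledged`, and `resolved` timestamps plus a `false_positive` flag set at closure. Here MTTR is measured from creation to resolution, and the false positive rate is the share of closed incidents judged false positive; your organization may define both differently.

```python
from datetime import datetime
from statistics import mean


def mean_minutes(incidents, start_key, end_key):
    """Average elapsed minutes between two timestamps across incidents."""
    return mean(
        (i[end_key] - i[start_key]).total_seconds() / 60 for i in incidents
    )


def soc_metrics(incidents):
    """Compute MTTA, MTTR, and false positive rate for a list of incidents."""
    return {
        "mtta_min": mean_minutes(incidents, "created", "acknowledged"),
        "mttr_min": mean_minutes(incidents, "created", "resolved"),
        "false_positive_rate": sum(
            1 for i in incidents if i["false_positive"]
        ) / len(incidents),
    }


def pct_reduction(before, after):
    """Percentage reduction from a baseline value to a pilot value."""
    return 100 * (before - after) / before


if __name__ == "__main__":
    # Made-up sample records, for illustration only.
    baseline = [
        {
            "created": datetime(2024, 1, 1, 10, 0),
            "acknowledged": datetime(2024, 1, 1, 10, 10),
            "resolved": datetime(2024, 1, 1, 11, 0),
            "false_positive": True,
        },
        {
            "created": datetime(2024, 1, 1, 12, 0),
            "acknowledged": datetime(2024, 1, 1, 12, 30),
            "resolved": datetime(2024, 1, 1, 14, 0),
            "false_positive": False,
        },
    ]
    print(soc_metrics(baseline))
```

Running `soc_metrics` on the same use case before and during the pilot, then passing the two values to `pct_reduction`, gives a figure you can compare against the reduction targets.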

What to Ask Vendors (and Why)

Models & guardrails: jailbreak resistance, prompt-injection mitigations, policy checks.

Data handling: where data lives, residency, retention, model training boundaries.

Integrations: named connectors (e.g., Splunk, Sentinel, CrowdStrike) and supported actions.

Metrics: precision/recall, false-positive reduction, MTTA/MTTR deltas on your data.

Autonomy controls: approval tiers, rollback, audit trails.
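When a vendor quotes precision and recall, it helps to be able to reproduce the numbers on your own data. One way to do that, sketched below, is to run the agent in shadow mode and compare its verdicts with the analysts’ final verdicts on the same alerts. The function name and the convention that `True` means “malicious” are assumptions for this example, and analyst verdicts are treated as ground truth, which is itself an approximation.

```python
def precision_recall(agent_verdicts, analyst_verdicts):
    """Compare agent verdicts with analyst verdicts (True = malicious).

    Returns (precision, recall), treating the analyst verdict as ground truth.
    """
    if len(agent_verdicts) != len(analyst_verdicts):
        raise ValueError("verdict lists must have the same length")

    pairs = list(zip(agent_verdicts, analyst_verdicts))
    tp = sum(1 for agent, truth in pairs if agent and truth)      # correctly flagged
    fp = sum(1 for agent, truth in pairs if agent and not truth)  # flagged but benign
    fn = sum(1 for agent, truth in pairs if not agent and truth)  # missed threats

    # Guard against division by zero when a class is absent from the sample.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


if __name__ == "__main__":
    # Made-up verdicts for five alerts, for illustration only.
    agent = [True, True, False, True, False]
    analyst = [True, False, True, True, False]
    print(precision_recall(agent, analyst))
```

Low precision means analysts still wade through noise; low recall means the agent is closing real threats as benign, which is usually the more dangerous failure when autonomy is increased.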

An AI SOC isn’t science fiction. It’s a disciplined operating model driven by agentic AI, graph reasoning, and strong governance. Start small, measure everything, and scale the wins. If you came here asking “What is an AI SOC?”, the next step is simple: pick three high-volume incident types, stand up an agent in shadow mode alongside your analysts, and prove the delta on your own telemetry.